If you have never heard of Kafka, this post is not for you. It won't re-explain what a messaging system is or what its components are, internal or external.

Kafka has proven to be a versatile tool for many use cases. In some of them, message ordering (messages being consumed in the same order they were produced) matters more than the speed of either production or consumption. Depositing $100 and then withdrawing from it is very different from doing it the other way around.


This illustration is here to please the eye rather than to serve any particular purpose.

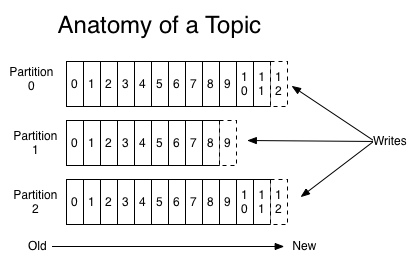

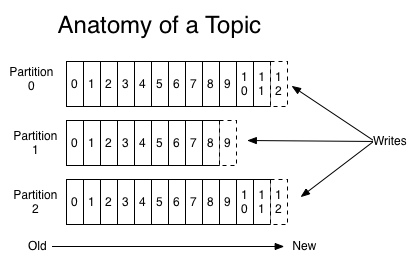

Partition

In Kafka, order can only be guaranteed within a partition. This means that if the producer sends messages in a specific order, the broker will write them to a partition in that order and all consumers will read them from it in the same order. So naturally, ordering is easier to enforce on a single-partition topic than on its multi-partition siblings.

But sometimes this setup is not desirable. Having a single partition limits consumption throughput to that of a single consumer. And in most systems, enforcing a global order over all messages ever created is unnecessary. The order of deposits and withdrawals on my account shouldn't interfere with that of my colleagues' accounts (the events are non-causal). In these cases, as long as related messages are sent to the same partition, (causal) message ordering is still guaranteed. In Kafka, this is achieved with keyed messages. Kafka partitioners hash the key and ensure that a given key always goes to the same partition, provided the number of partitions does not change.
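The key-to-partition mapping can be sketched in a few lines. This is only an illustration: `partition_for` is a hypothetical helper, and CRC32 stands in for the hash the real client uses (the Java client's default partitioner hashes keys with murmur2).

```python
import zlib

def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition using a stable hash (CRC32 as a stand-in)."""
    return zlib.crc32(key.encode("utf-8")) % num_partitions

# Hypothetical account events, in the order they were produced.
events = [
    ("alice", "deposit 100"),
    ("bob", "deposit 50"),
    ("alice", "withdraw 30"),
]

# Each partition is an append-only list, like a partition log.
partitions = {p: [] for p in range(4)}
for key, value in events:
    partitions[partition_for(key, 4)].append((key, value))

# All of alice's events sit in one partition, in production order.
alice_log = [v for k, v in partitions[partition_for("alice", 4)] if k == "alice"]
print(alice_log)
```

Because the partition is computed modulo the partition count, adding partitions later can move a key to a different partition, which is why the guarantee only holds while the count stays fixed.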

If the naive hash scheme skews the size of your partitions, e.g. a hyperactive bitcoin broker whose disproportionate number of activities makes his partition twice as large as any other, Kafka allows you to plug in a custom partitioner.
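As a rough sketch of what such a partitioner might decide, the function below pins a known heavy key to its own partition and hashes everyone else over the remaining ones. The names `HOT_KEYS` and `custom_partition` are made up for illustration, and CRC32 is again a stand-in hash. In the Java client the equivalent logic would live in a class implementing the `Partitioner` interface, registered through the `partitioner.class` producer setting.

```python
import zlib

# Hypothetical mapping: heavy key -> partition reserved for it.
HOT_KEYS = {"busy-broker": 0}

def custom_partition(key: str, num_partitions: int) -> int:
    """Give hot keys a dedicated partition; hash all other keys over the rest."""
    reserved = len(set(HOT_KEYS.values()))
    if num_partitions <= reserved:
        raise ValueError("need more partitions than reserved hot partitions")
    if key in HOT_KEYS:
        return HOT_KEYS[key]
    # Reserved partitions are assumed to be 0..reserved-1, so shift past them.
    return reserved + zlib.crc32(key.encode("utf-8")) % (num_partitions - reserved)

print(custom_partition("busy-broker", 4))  # always the reserved partition
print(custom_partition("alice", 4))        # somewhere in partitions 1..3
```

The mapping stays deterministic, so per-key ordering is preserved; the only change is which keys share a partition.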

Configuration

Kafka's numerous settings include `retries`, the number of times a message will be retried after an intermittent error, and `max.in.flight.requests.per.connection`, the number of unacknowledged requests the producer will send on a single connection before blocking (this is pipelining; the requests are not sent concurrently). The combination of these two can cause a bit of trouble. It is hard to build a reliable system with zero `retries`. On the other hand, with retries enabled it is possible that the broker fails the first batch of messages, succeeds with the second (already in flight), and then succeeds with the retried first batch, which now lands after the second. With positive `retries`, `max.in.flight.requests.per.connection` should therefore be set to 1. This comes with a penalty on producer throughput and should only be used where order enforcement is a must.
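The failure sequence above can be reproduced with a toy model. This is not how the producer is implemented; `simulate` is a hypothetical function that sends up to `max_in_flight` batches before looking at the acknowledgements and retries a failed batch afterwards.

```python
from collections import deque

def simulate(batches, max_in_flight, fail_first_attempt):
    """Return the order in which batches end up in the partition log.

    Toy model: batches named in `fail_first_attempt` fail once, then
    succeed when retried after the rest of their window was processed.
    """
    log = []
    pending = deque(batches)
    already_failed = set()
    while pending:
        window_size = min(max_in_flight, len(pending))
        window = [pending.popleft() for _ in range(window_size)]
        retries = []
        for batch in window:
            if batch in fail_first_attempt and batch not in already_failed:
                already_failed.add(batch)
                retries.append(batch)   # first attempt fails
            else:
                log.append(batch)       # written to the log
        # Failed batches go back to the front of the queue, original order kept.
        pending.extendleft(reversed(retries))
    return log

print(simulate(["b1", "b2"], max_in_flight=2, fail_first_attempt={"b1"}))  # ['b2', 'b1']
print(simulate(["b1", "b2"], max_in_flight=1, fail_first_attempt={"b1"}))  # ['b1', 'b2']
```

With two requests in flight, the retried `b1` lands behind `b2`; with one, the producer does not move on until `b1` is acknowledged. Newer Kafka clients also offer an idempotent producer (`enable.idempotence`), which the Kafka documentation describes as preserving order with up to five in-flight requests, so it is worth checking what your client version supports before giving up pipelining.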

Producer

Sadly, there is no ordering guarantee for messages written by different producers; the broker simply uses the order in which it receives them. If the system design insists on multiple producers, one topic, and strong ordering, the responsibility falls on the producer application code. This means a bigger investment, which is why this option comes last. As neither clocks nor the network in a distributed system are reliable, sequence numbers can replace timestamps for expressing the order of messages.

The Lamport timestamp is one such simple and compact mechanism. It cannot, however, tell whether two messages are concurrent or causally dependent. The more heavyweight version vectors will gladly take that job for a few extra dimes.
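Both mechanisms fit in a short sketch. The class and function names below (`LamportClock`, `compare_vectors`) and the producer ids are illustrative assumptions, not part of any Kafka API; the application would have to carry these values in the message payload or headers itself.

```python
class LamportClock:
    """A single integer counter per producer."""

    def __init__(self):
        self.time = 0

    def tick(self):
        """Local event or send: advance the clock and return the timestamp."""
        self.time += 1
        return self.time

    def receive(self, remote_time):
        """Merge a timestamp seen on an incoming message, then advance."""
        self.time = max(self.time, remote_time) + 1
        return self.time


def compare_vectors(a, b):
    """Compare two version vectors given as {producer_id: counter} dicts."""
    keys = set(a) | set(b)
    a_le_b = all(a.get(k, 0) <= b.get(k, 0) for k in keys)
    b_le_a = all(b.get(k, 0) <= a.get(k, 0) for k in keys)
    if a_le_b and b_le_a:
        return "equal"
    if a_le_b:
        return "before"       # a causally precedes b
    if b_le_a:
        return "after"        # b causally precedes a
    return "concurrent"       # neither has seen the other


p1, p2 = LamportClock(), LamportClock()
t1 = p1.tick()        # p1 sends a message stamped 1
t2 = p2.receive(t1)   # p2 sees it and moves to 2
print(t1, t2)         # 1 2

print(compare_vectors({"p1": 1}, {"p1": 1, "p2": 1}))  # before
print(compare_vectors({"p1": 2}, {"p2": 1}))           # concurrent
```

The Lamport values give an order consistent with causality, but two unrelated messages still receive comparable numbers. The vector comparison is what distinguishes "before" from "concurrent", at the cost of one counter per producer.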

TLDR

Total order is easiest to maintain with a single partition and a single producer. With multiple partitions, send causally dependent messages to the same partition using keys and a partitioner. With multiple producers, you need an independent sequencing scheme. If you want retries and ordering in the same place, accept the tradeoff of giving up message pipelining.